AI is no longer a future workload. It is the defining force reshaping infrastructure strategy today. Training clusters, GPU-dense environments, and high-performance interconnects are driving unprecedented demand for compute capacity.

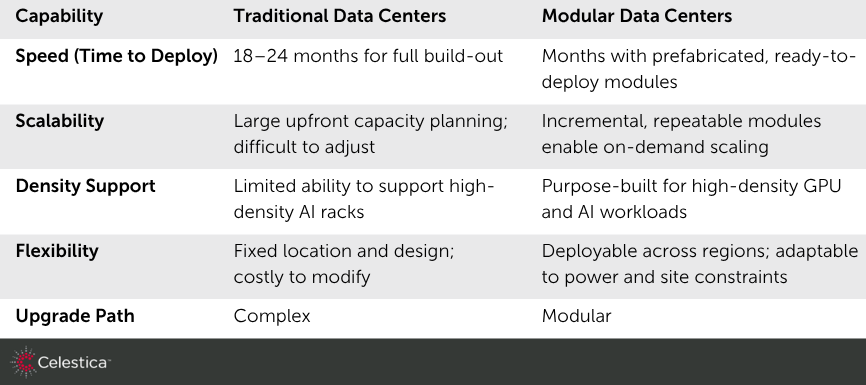

Traditional data center models, designed for steady and predictable growth, are struggling to keep pace. Facilities that once required 18–24 months to design and deploy are now fundamentally misaligned with AI innovation cycles, where infrastructure must scale in months, not years.

AI workloads also introduce new design constraints. High rack densities, increased power draw, and advanced cooling requirements demand a level of infrastructure specialization that legacy environments were never built to support.

For hyperscalers, neocloud providers, and enterprise AI teams, the challenge is clear: infrastructure velocity must match the speed of AI innovation, or infrastructure itself becomes a bottleneck to growth.

The Infrastructure Bottlenecks Slowing AI Expansion

Despite strong demand, scaling AI infrastructure is constrained by several systemic challenges.

- Talent scarcity is one of the most immediate barriers. Designing and deploying high-density AI environments requires specialized expertise across power engineering, thermal management, and rack integration. These skills are in limited supply globally.

- Density incompatibility presents another critical issue. Many existing facilities cannot support the power and cooling requirements of modern AI racks, forcing costly retrofits or entirely new builds.

- Velocity gaps further compound the problem. Conventional construction timelines delay time-to-value, making it difficult for organizations to respond to rapidly evolving AI workloads.

- Global scalability remains inconsistent. Multinational deployments require standardized, repeatable infrastructure models that can be deployed across regions without sacrificing performance or reliability.

Together, these challenges create a widening gap between AI ambition and infrastructure readiness.

Modular Data Centers: A New Model for Scalable Infrastructure

Modular data centers (MDCs) represent a fundamental shift in how hyperscalers design, build, and deploy infrastructure. Instead of constructing facilities entirely on-site, MDCs leverage prefabricated, factory-integrated modules that arrive ready for rapid deployment.

Customers define the IT equipment needed and the deployment site, and provide power and fiber connectivity. From there, Celestica delivers a fully integrated turnkey solution, handling design, rack integration, power distribution, cooling systems, system-level validation, and deployment.

Celestica’s approach transforms infrastructure deployment from a construction project into a productized, scalable solution, enabling organizations to deploy AI-ready environments with significantly greater speed and predictability.

Why Modularity Is Critical for AI Infrastructure

As AI workloads continue to scale, modularity is emerging as a critical enabler of next-generation infrastructure.

First, speed becomes a strategic advantage. Modular deployments dramatically reduce time-to-deploy, allowing organizations to bring AI capacity online faster and respond to evolving demand.

Second, MDCs provide scalable infrastructure blocks. Instead of overbuilding capacity upfront, organizations can expand incrementally, aligning infrastructure investment with actual workload growth.

Third, MDCs enable flexible capacity expansion across geographies. This is particularly valuable for capturing stranded power or optimizing real estate in regions where traditional builds are impractical.

Most importantly, MDCs are optimized for high-density AI workloads. Integrated power and advanced cooling designs support GPU-intensive environments, ensuring performance, efficiency, and reliability at scale.

In an era where infrastructure agility directly impacts AI competitiveness, modularity is becoming the preferred model.
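The incremental-expansion point above (adding infrastructure blocks as workload grows rather than overbuilding upfront) can be illustrated with some simple arithmetic. The sketch below is purely illustrative: the quarterly demand figures, the 500 kW module size, and the function names are hypothetical assumptions, not Celestica specifications or customer data.

```python
import math

def modules_needed(demand_kw, module_kw):
    """Smallest number of modules whose combined capacity covers the demand."""
    return math.ceil(demand_kw / module_kw)

def expansion_plan(demand_by_quarter, module_kw):
    """Return (quarter, modules_deployed, capacity_kw, idle_kw) for each quarter.

    Modules are only ever added, never removed, so the deployed count
    is the running maximum of what each quarter requires.
    """
    plan = []
    deployed = 0
    for quarter, demand_kw in enumerate(demand_by_quarter, start=1):
        deployed = max(deployed, modules_needed(demand_kw, module_kw))
        capacity_kw = deployed * module_kw
        plan.append((quarter, deployed, capacity_kw, capacity_kw - demand_kw))
    return plan

# Hypothetical inputs: IT load forecast per quarter (kW) and module size (kW).
demand = [400, 900, 1500, 2600]
MODULE_KW = 500

plan = expansion_plan(demand, MODULE_KW)

# Baseline for comparison: build the full peak capacity on day one.
upfront_capacity = modules_needed(max(demand), MODULE_KW) * MODULE_KW

for quarter, deployed, capacity_kw, idle_kw in plan:
    upfront_idle = upfront_capacity - demand[quarter - 1]
    print(
        f"Q{quarter}: {deployed} module(s), {capacity_kw} kW deployed, "
        f"{idle_kw} kW idle (vs {upfront_idle} kW idle if built upfront)"
    )
```

Under these assumed numbers, the modular plan carries at most one partially used module at any time, while the upfront build leaves most of its capacity idle in the early quarters. Real planning would also need to account for module lead times, site power availability, and redundancy, which this sketch ignores.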

Moving at the Pace of AI

AI infrastructure demand is only accelerating. As models grow larger and workloads become more distributed, the need for scalable, high-performance infrastructure will continue to intensify.

Modular data centers provide a clear path forward. By enabling faster deployment, standardized scalability, and regional flexibility, they allow organizations to keep pace with AI innovation while maintaining operational efficiency.

Looking ahead, infrastructure innovation will play a defining role in sustaining AI growth. Emerging architectures, open ecosystems, and evolving standards will further shape how modular solutions are designed and deployed at scale.

For organizations seeking to stay competitive in the AI era, the question is no longer whether to adopt modularity, but how quickly they can operationalize it.

Celestica’s Approach: From Design to Deployment

Celestica brings a design-led, product-minded approach to modular data center infrastructure, bridging the gap between concept and deployment.

Rather than treating infrastructure as a series of disconnected components, Celestica delivers end-to-end, turnkey solutions that integrate every layer of the stack:

- Application-aware infrastructure design aligned to AI workloads

- Seamless integration of racks, power, and cooling systems

- Global manufacturing scale to support rapid, high-volume deployment

- Supply chain resilience to ensure consistent delivery across regions

- Aftermarket solutions to support installation, commissioning, and ongoing maintenance

This integrated approach enables customers to move from design to deployment with greater confidence, reducing complexity while accelerating time-to-value.

By combining engineering expertise with global execution capabilities, Celestica helps organizations deploy infrastructure that is faster, more reliable, more scalable, and better aligned to the demands of modern AI environments.

To learn how Celestica enables faster AI infrastructure deployment through modular data center solutions, schedule a meeting with one of our experts.